Internship Projects

More projects will be announced soon!

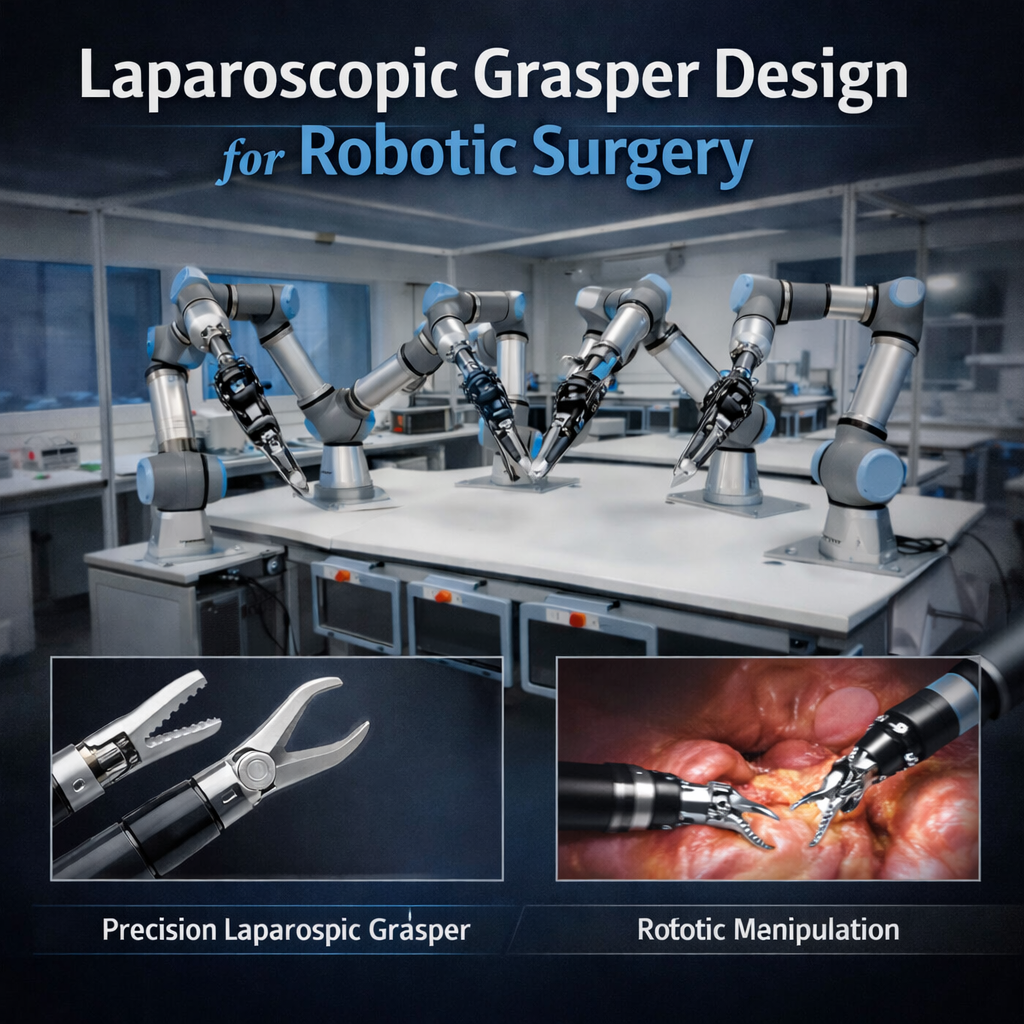

Laparoscopic Grasper Design for Robotic Surgery

This project focuses on building a high-precision robotic grasper module for our surgical suite. You will bridge the gap between clinical tools and advanced robotics by robotizing two medical-grade graspers and designing a custom 6-DOF actuator to achieve unparalleled manipulative precision.

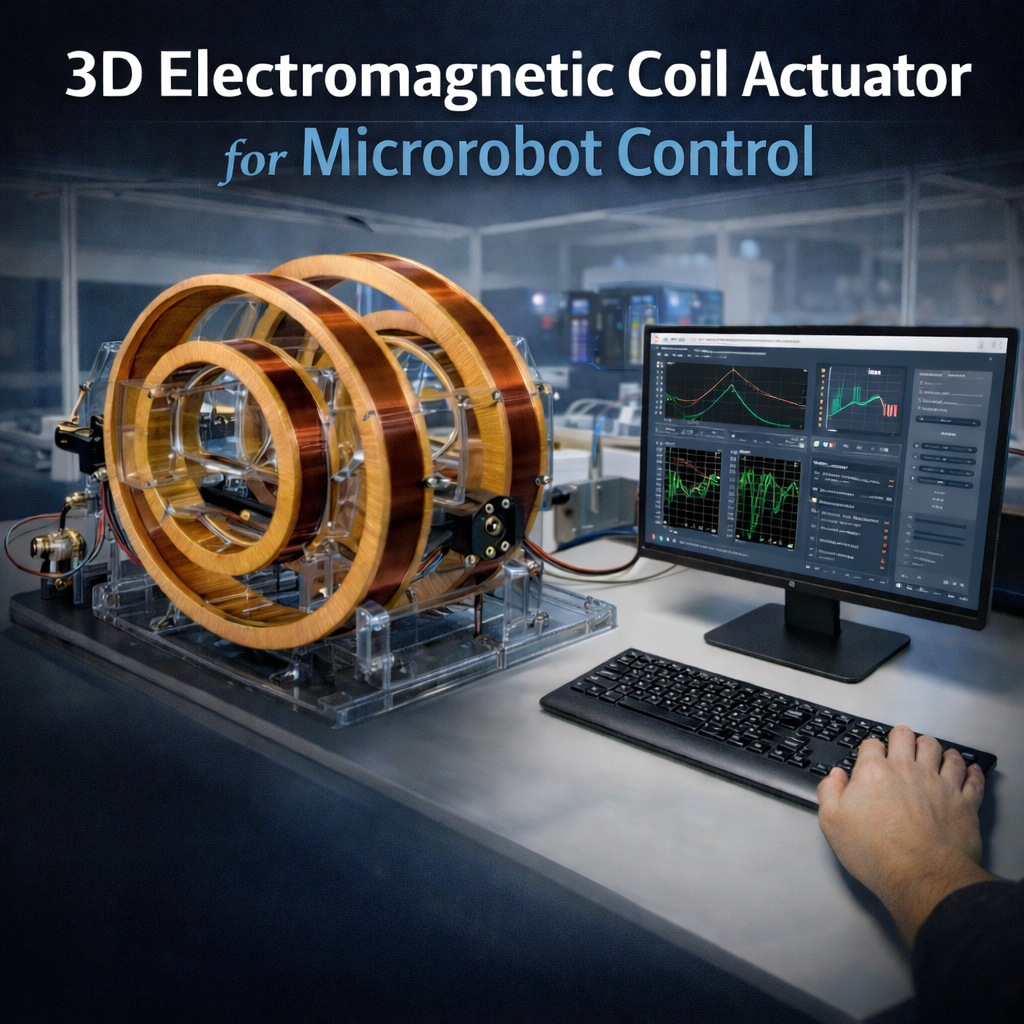

3D Electromagnetic Coil Actuator for Microrobot Control

This project focuses on the design and development of a 3D Helmholtz coil system for precise magnetic actuation of microrobots. You will model, simulate, and manufacture multi-axis electromagnetic coils capable of generating controlled and uniform magnetic fields in three dimensions. Following hardware development, you will design and implement a real-time control interface to regulate field strength and direction, enabling accurate microrobot manipulation and trajectory control within a defined workspace.
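As a rough first-pass sizing aid for such a coil system, the field at the midpoint of an ideal Helmholtz pair is B = (4/5)^(3/2) · μ0 · N · I / R, where N is the number of turns per coil, I the current, and R the coil radius. The sketch below assumes thin windings, a coil separation equal to the radius, and three orthogonal, uncoupled axes; the function names, turn counts, and radii are illustrative assumptions, not project specifications.

```python
import math

MU_0 = 4 * math.pi * 1e-7  # vacuum permeability in T*m/A


def helmholtz_center_field(turns, current_a, radius_m):
    """Flux density (tesla) at the midpoint of an ideal Helmholtz pair."""
    return (4 / 5) ** 1.5 * MU_0 * turns * current_a / radius_m


def currents_for_field(target_t, turns, radii_m):
    """Per-axis currents (A) for a target field vector (Bx, By, Bz) in tesla.

    Assumes each axis is an independent ideal pair, so the field on an axis
    scales linearly with the current in that axis only.
    """
    return tuple(
        b / helmholtz_center_field(n, 1.0, r)
        for b, n, r in zip(target_t, turns, radii_m)
    )


# Hypothetical nested coil set: same turn count, a different radius per axis.
TURNS = (100, 100, 100)
RADII_M = (0.05, 0.07, 0.09)

ix, iy, iz = currents_for_field((1e-3, 0.0, 2e-3), TURNS, RADII_M)
print(f"Ix={ix:.3f} A, Iy={iy:.3f} A, Iz={iz:.3f} A")
```

A real control interface would likely replace the closed-form constant with a calibrated field-per-ampere value measured for each axis, since manufactured coils deviate from the ideal geometry.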

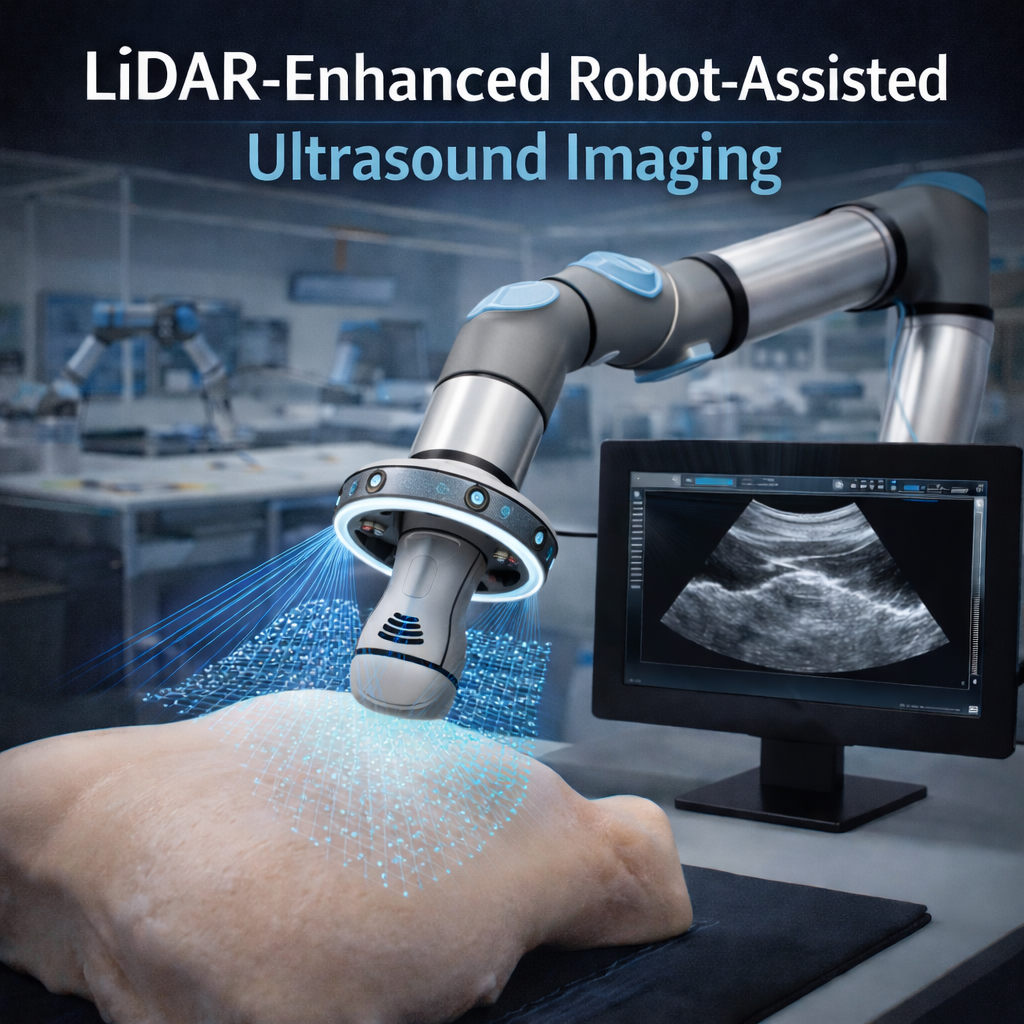

LiDAR for Robot-assisted Ultrasound Imaging

This project investigates integrating LiDAR sensing into robot-assisted ultrasound systems to improve spatial awareness and surface reconstruction. You will design and integrate a compact LiDAR module onto a robotic manipulator to acquire real-time 3D surface data of the patient’s anatomy. In parallel, you will develop surface mapping and registration algorithms that fuse LiDAR-based geometry with ultrasound data, enabling more accurate probe positioning, consistent contact control, and improved image quality during robotic scanning.

Concentric-tube Actuator Design

This project will explore various concentric-tube actuation methods for a robotic endoscope system. You will design a transmission system for a concentric tube actuator, prototype the actuator, and test its performance.

Pixels in Space: Turning Sparse Rover Photos into 3D Maps

Imagine exploring distant planets through the eyes of a rover, where every image you capture tells a story about an alien landscape. Our project brings computer vision and robotics together to reconstruct 3D scenes from ultra-low frame-rate rover images, pushing the limits of energy-efficient planetary navigation. By working with us, you’ll turn sparse visual data into meaningful maps and motion insights, directly contributing to future space exploration missions. Join our team to innovate at the frontier of robotics, vision, and discovery, and see your code guide robots across unexplored terrains.

GTA: Virtual Streets, Real Intelligence (Game to Actual Footage)

Imagine turning simple game footage into hyper-realistic, true-to-life city scenes where robots can safely learn to navigate. Our project uses cutting-edge transfer learning to bridge the gap between virtual worlds and real-world scenarios, creating rich training data without expensive sensors or risky field tests. By joining us, you’ll transform pixels from games and movies into intelligent datasets that train autonomous systems and push the boundaries of AI. Dive into a space where computer vision, robotics, and creativity meet, and see your code come to life in both virtual and physical worlds.

Signal to Motion: Building a Real-Time Angle-Aware UWB Tracking System for Robotic Vehicles

Imagine developing an indoor positioning system that works where GPS fails and transforms raw UWB signals into precise, real-time cooperative vehicle tracking. In this project, you will design an angle-aware multi-UWB architecture, implement advanced sensor fusion with IMU data, and validate accuracy beyond conventional trilateration methods. Your algorithms will be deployed on robotic vehicles and tested in real experiments, evaluating tracking performance, motion consistency, and traversability across challenging indoor layouts. If you are passionate about signal processing, embedded systems, robotics, and hands-on experimentation, this is your opportunity to build and test a full-stack localization system from hardware to intelligent navigation.
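For context, the conventional trilateration baseline mentioned above can be sketched in a few lines. This is a minimal 2D illustration, assuming fixed anchors at known positions and range-only measurements; it is not the angle-aware method the project will develop, and the anchor layout and function name are hypothetical.

```python
import math


def trilaterate_2d(anchors, ranges):
    """Least-squares 2D position from >= 3 anchor positions and measured ranges."""
    if len(anchors) < 3 or len(anchors) != len(ranges):
        raise ValueError("need at least 3 anchors and one range per anchor")
    (x0, y0), d0 = anchors[0], ranges[0]
    # Subtracting the first range equation from the others cancels the
    # quadratic terms and leaves linear rows a1*x + a2*y = b.
    s11 = s12 = s22 = t1 = t2 = 0.0
    for (xi, yi), di in zip(anchors[1:], ranges[1:]):
        a1, a2 = 2 * (xi - x0), 2 * (yi - y0)
        b = d0**2 - di**2 + xi**2 + yi**2 - x0**2 - y0**2
        # Accumulate the 2x2 normal equations (A^T A) p = A^T b.
        s11 += a1 * a1
        s12 += a1 * a2
        s22 += a2 * a2
        t1 += a1 * b
        t2 += a2 * b
    det = s11 * s22 - s12 * s12
    if abs(det) < 1e-12:
        raise ValueError("anchors are collinear; position is not observable")
    # Solve the 2x2 system with Cramer's rule.
    return (s22 * t1 - s12 * t2) / det, (s11 * t2 - s12 * t1) / det


# Hypothetical room with four corner anchors (metres) and noise-free ranges.
ANCHORS = [(0.0, 0.0), (4.0, 0.0), (0.0, 3.0), (4.0, 3.0)]
true_pos = (1.0, 1.0)
measured = [math.hypot(true_pos[0] - ax, true_pos[1] - ay) for ax, ay in ANCHORS]
print(trilaterate_2d(ANCHORS, measured))
```

With noisy ranges this estimate degrades, which is one motivation for adding angle information and IMU fusion on top of it.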

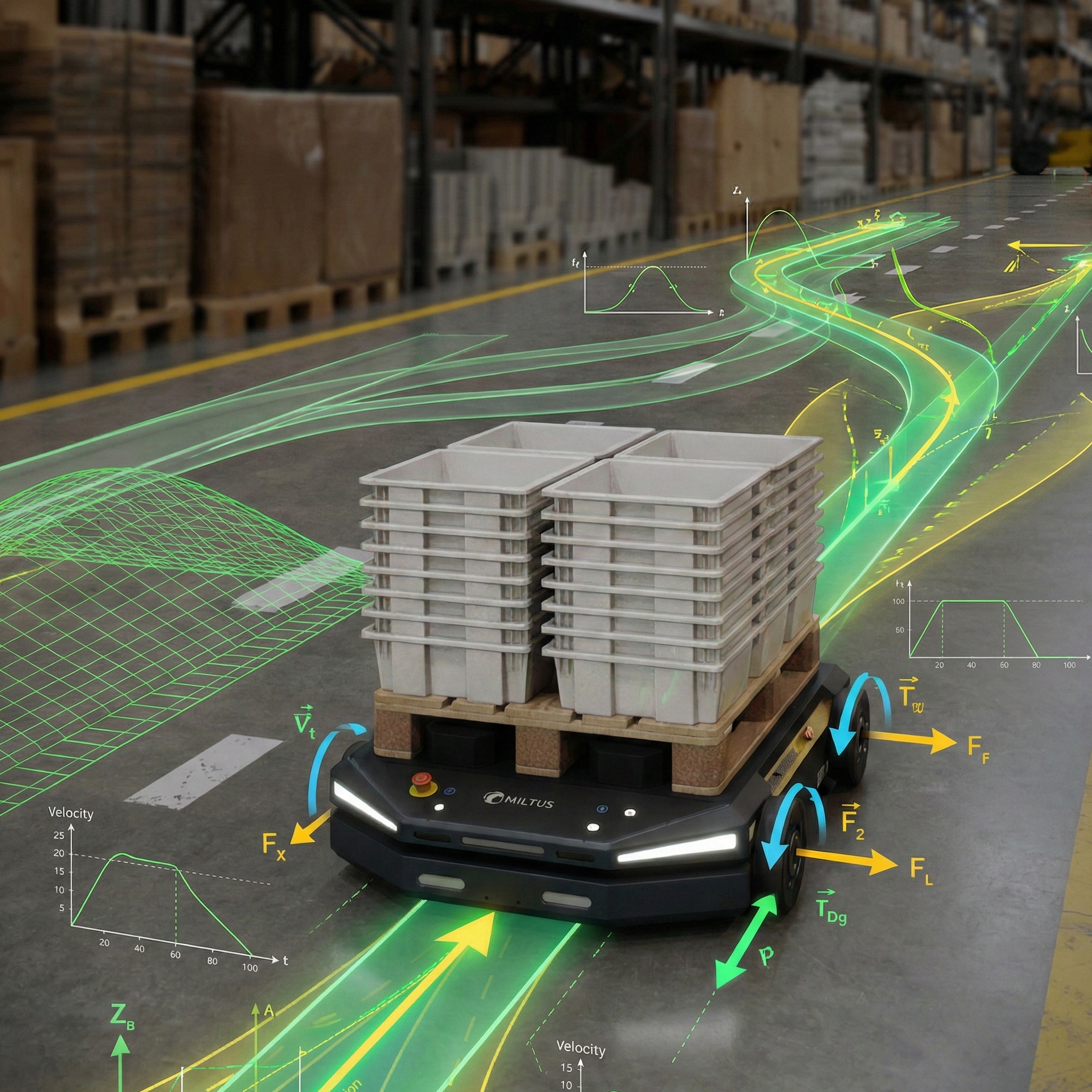

Multi-Modal AI Sensor Fusion for Autonomous Mobile Robot SLAM

This project focuses on advancing the spatial awareness and autonomous navigation capabilities of a mobile robotic platform operating in dynamic warehouse environments. You will integrate advanced AI-based perception into a SLAM pipeline that fuses multi-modal sensor data. A core focus of your work will be deploying state-of-the-art deep learning models to enhance real-time perception and obstacle avoidance. You will validate the developed system using offline datasets and simulation environments, followed by real-world deployment on a physical robot.

Motion Planning and Control for Autonomous Mobile Robot Navigation

This project focuses on developing robust state estimation, navigation, and control systems for autonomous mobile robots. You will bridge the gap between theory and real-world deployment by gaining high-level architectural insights into industry-proven mobile robot stacks. You will apply advanced control theory to manage complex vehicle dynamics, developing trajectory generation algorithms that deliver smooth, collision-free, and highly precise navigation.

Perception and Object Pose Estimation for Robotics

In this project, you will research and develop methods for robotic perception in real-world industrial settings. Using computer vision and machine learning, you will work on detecting objects and estimating their precise 3D position and orientation from camera and LiDAR data. A core challenge is robustness: the robot must operate reliably under adverse conditions, including poor lighting and cluttered, unstructured environments. You will gain hands-on experience designing experiments, benchmarking algorithms, and iterating on models that hold up under demanding real-world conditions.

Planning, Control, and Coordination of Tethered Aerial-Ground Robots

This project aims to enable fully autonomous operation of unmanned aerial and ground vehicles connected by a physical tether in rugged terrain under communication constraints. By developing force and visual feedback algorithms, the project seeks to reduce operator dependency and ensure more reliable, coordinated execution of hazardous tasks, such as disaster response and solar panel maintenance.

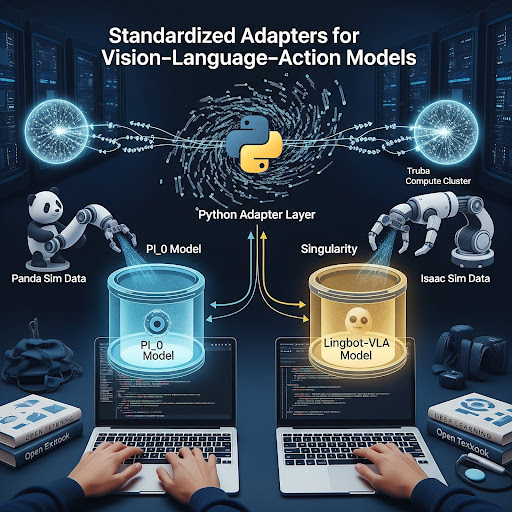

Standardized Adapters for Vision-Language-Action Models

This project focuses on building a unified software ecosystem for deploying state-of-the-art Vision-Language-Action (VLA) models such as pi_0 and Lingbot-VLA. You will develop Singularity containers to resolve complex dependency conflicts and build Python adapter layers that seamlessly convert existing Panda and Isaac simulation data into model-specific schemas. By writing these critical wrappers for dataset processing and inference, you will ensure these advanced architectures run smoothly on compute clusters like TRUBA. This role offers hands-on experience bridging the gap between rapidly evolving AI research and practical robotic deployment.
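One possible shape for such an adapter layer is a small registry that maps a model name to a conversion function. The sketch below is purely illustrative: the `SimFrame` fields, the `example_vla` model name, and the output keys are hypothetical placeholders and do not reflect the actual Isaac, Panda, pi_0, or Lingbot-VLA data formats.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass
class SimFrame:
    """One simulator time step (hypothetical layout, not a real simulator schema)."""
    rgb_path: str
    joint_positions: List[float]
    gripper_open: bool
    instruction: str


Adapter = Callable[[SimFrame], Dict[str, Any]]
ADAPTERS: Dict[str, Adapter] = {}


def register(model_name: str) -> Callable[[Adapter], Adapter]:
    """Decorator that stores an adapter function under a model name."""
    def decorator(fn: Adapter) -> Adapter:
        ADAPTERS[model_name] = fn
        return fn
    return decorator


@register("example_vla")  # hypothetical model name and record keys
def to_example_vla(frame: SimFrame) -> Dict[str, Any]:
    # Append the gripper flag to the joint vector as a single float.
    state = list(frame.joint_positions) + [1.0 if frame.gripper_open else 0.0]
    return {"image": frame.rgb_path, "state": state, "prompt": frame.instruction}


def convert(frames: List[SimFrame], model_name: str) -> List[Dict[str, Any]]:
    """Convert simulator frames into records for the named model."""
    adapter = ADAPTERS[model_name]  # raises KeyError for unknown models
    return [adapter(f) for f in frames]


frames = [SimFrame("frame_000.png", [0.0, 0.5, -0.5], True, "pick up the cube")]
print(convert(frames, "example_vla"))
```

Keeping each model's conversion in its own registered function means that supporting a new model only requires adding one adapter, without touching the dataset-processing code that calls `convert`.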

Benchmarking VLA Models in Isaac Sim

This project focuses on building the technical simulation infrastructure required to evaluate cutting-edge Vision-Language-Action (VLA) models. You will use Python and NVIDIA Isaac Sim to construct dynamic virtual environments, extract real-time object state data, and execute core robotic manipulations. By mastering these fundamental simulator operations, you will develop the robust benchmarking pipelines necessary to assess how well modern AI models perform on complex robotic arm tasks. Ideal for students with strong Python skills and a deep curiosity for robotic simulators, this role offers a practical entry point into the intersection of AI and simulated robotics.

Automated 3D Printing Infrastructure and Component Design

This project focuses on optimizing our continuous 3D printing pipeline for Romer applications. You will develop both software and hardware solutions to manage a print queue, including engineering an automated print bed clearing system for uninterrupted manufacturing. Alongside this infrastructure work, you will spearhead the 3D design of new robotic components and iteratively improve the geometry of existing parts. Ideal for students proficient in 3D CAD design and familiar with 3D printer operations, this hands-on role bridges rapid prototyping with automated, scalable production.

Automated Data and Benchmarking Pipeline for VLA Models

This project focuses on architecting an automated data collection and performance reporting pipeline to evaluate advanced Vision-Language-Action (VLA) models. You will bridge the gap between NVIDIA Isaac Sim and the TRUBA compute cluster by engineering a robust system for seamless data extraction, systematic logging, and automated benchmarking. By developing this critical infrastructure using tools like Docker and Singularity, you will enable the rapid, at-scale assessment of state-of-the-art robotic intelligence. Ideal for disciplined 3rd- or 4th-year students with strong Python, Linux/SSH, and containerization skills, this role offers deep exposure to large-scale AI and robotic simulation workflows.

Theoretical Design and Optimization of Robotic Controllers

This project focuses on evaluating and actively redesigning the theoretical foundations of our robotic control systems. You will dive deep into control theory to review existing controller architectures, identify areas for improvement, and mathematically redesign them to achieve superior performance, precision, and stability. By optimizing these fundamental control strategies, you will directly improve the physical reliability and dynamic capabilities of our robotic platforms. In this project, you will turn your understanding of control theory into practical expertise, gaining hands-on experience translating mathematical models into real-world Python or C++ algorithms while mastering applied robot kinematics and dynamics.